Practice Experience Management Software

Unleash Your Practice's Full Potential with Practice Experience Management (PXM)

Experience the PXM revolution: Seamlessly unite patient care and practice management into one integrated system—driving greater patient engagement, superior outcomes, and sustainable practice growth.

Why PXM—and why now?

Rehab therapy has undergone a significant transformation. Skyrocketing demands from patients and payers have burned out practices and eroded their business margins to a breaking point.

On top of these mounting pressures, the rehab therapy software industry has become fractured into point solutions or false all-in-one platforms.

There is no modern, unified software platform, and the existing, disconnected experience is a barrier to increasing patient retention, optimizing outcomes, and improving business margins.

We think the industry deserves a better way—and we call it Practice Experience Management (PXM).

What is WebPT’s role in the PXM model?

In 2008, WebPT revolutionized the rehab therapy industry by introducing the first-ever cloud-based EMR. Since then, WebPT has continued to lead the industry, improving the most-trusted EMR for rehab therapy while adding solutions for marketing, front office, and billing that make it easier for practices to improve care and grow their business.

Today, WebPT is innovating once again. To meet the growing demands of clinicians and patients, it’s expanding the WebPT platform into a comprehensive ecosystem of solutions, data, and insights designed to seamlessly manage every aspect of rehab therapy practice—becoming the first-ever Practice Experience Management (PXM) platform for the industry.

What Can WebPT's PXM Platform Do For Your Practice? Everything.

Powerful Central Administration and Security

Rest assured—your clinical data is safe with us.

Engage

Increase patient satisfaction, reduce administrative costs, and improve patient retention with powerful, personalized touch points that build loyalty and trust at every stage of the patient journey.

Care

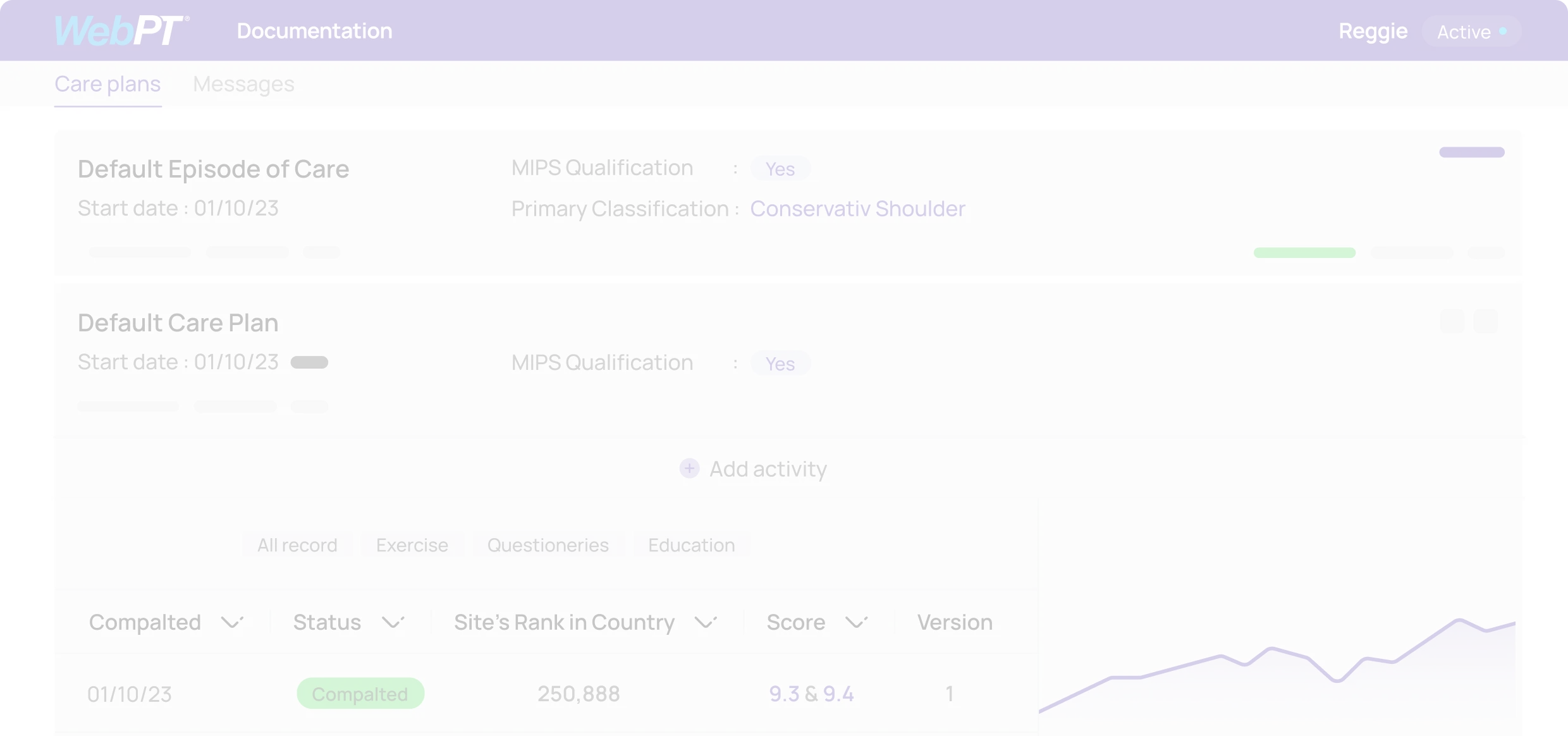

Enhance clinical productivity and outcomes, without sacrificing the patient experience or your staff’s well-being, with an EMR and front-office solutions designed specifically for rehab therapy workflows.

Monetize

Take control of your practice's financial success and watch your revenue soar to new heights by streamlining billing processes, reducing claim denials, optimizing reimbursements, and maximizing revenue capture like never before.

Practice Intelligence Data and Analytics Insights

Let data drive your decision-making.

Learn how WebPT’s PXM Platform can catapult your practice to new heights.

Get Started

"It just made sense: no server, no IT, no updates to buy, Web-based, compliant, simple interface, and constant new features."
